It’s 2026 and AI-assisted programming is more popular than ever. From AI development augmentation to completely vibe-coded products, it’s clear AI coding tools are here to stay. So the question becomes: what does responsible AI software development look like in this new era?

The Need for Responsible AI Coding

Over the past few years, we’ve seen AI-assisted coding become more than a trend, with people from indie devs to multinational companies moving towards an AI-powered future. But we’re starting to see some worrying trends; there seems to be a correlation between AI coding tool use and platform outages. Whether or not AI is the cause, we should think critically about when, how and why we use AI to make software.

This risk is combined with the fact that AI models are fragile — one moment they’re providing great code after great code, but the next they’re deleting your production database. It’s clear there’s a need for some responsibility over AI-written code, and leaving that to the models themselves is fraught with danger.

As developers, we have a duty to use all tooling — including AI tooling — responsibly. So let’s look at some responsible coding practices when using AI so you can balance the benefits of the tools and the risks they pose.

Coding Responsibly with AI

1. Take responsibility for AI outputs

Responsibility and governance are becoming big problems in the AI space, and that’s no different in software development. When AI code breaks something, whose responsibility is that? When an AI agent brings down production, how do you ensure it doesn’t happen again?

The key with responsibility isn’t to play the blame-game, but to trace a problem back to its cause and work towards fixing the root problem. If your root problem is “the AI got it wrong”, what kind of action plan can you make? Issues start to arise when there’s no clear path back to any human action.

Blaming AI for problems is an easy way to skirt around the edges of responsibility. Nobody learns; no systems improve; nothing changes. You just wait for a new model and hope it doesn’t happen again.

The legal question of responsibility for AI or autonomous actions is still largely unanswered. But for now, as a dev, team or organisation, the best way to retain accountability when using AI is to apply the same rules as if you wrote the code yourself, or did the action yourself. So when committing AI code, or setting up an agent, treat it like your responsibility.

2. Write tests for everything that matters

In 2026, tests are still a core tool in the review process — and they aren’t going away any time soon. Writing detailed and comprehensive test suites already helps ensure that your systems:

- Maintain their existing functionality as new features are added.

- Do not break any existing code when refactors are completed.

- Provide a way to ensure complex business logic is correct when new requirements are added.

And all of this is true for AI code, with one crucial addition: don’t over-rely on AI to write your tests. Avoid prompts like “generate some tests for this code”, as they can lead models to write tautological tests: tests that check what the code actually does rather than what it should do. Good tests are written from the spec, not the code, and AI models are susceptible to errors, just like humans.

If an AI misunderstands a complex logic flow, it may write tests which also contain that mistake, so the tests pass when they shouldn’t. Instead, write tests based on known inputs and outputs: maybe your client has given you an example to test against, or you can manually work out some cases by looking at the spec. Then, use AI to check your tests against the spec, and investigate any disagreements it has.
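As a rough sketch of the difference, assume a hypothetical spec that says orders of 100.00 or more receive a 10% discount. The function `apply_discount`, the threshold and the figures below are all made up for illustration. The implementation has a boundary bug (`>` where the spec implies `>=`), and the two tests show how a test derived from the code can pass while a test derived from the spec fails.

```python
from decimal import Decimal

# Hypothetical spec (illustrative only):
# "Orders of 100.00 or more receive a 10% discount."
THRESHOLD = Decimal("100.00")


def apply_discount(total: Decimal) -> Decimal:
    """Buggy on purpose: uses > where the spec's 'or more' means >=."""
    if total > THRESHOLD:
        return total * Decimal("0.90")
    return total


def tautological_test() -> bool:
    """Expected value is derived by mirroring the code's own logic."""
    total = Decimal("100.00")
    expected = total * Decimal("0.90") if total > THRESHOLD else total
    return apply_discount(total) == expected


def spec_test() -> bool:
    """Expected value is worked out by hand from the spec: 100.00 -> 90.00."""
    return apply_discount(Decimal("100.00")) == Decimal("90.00")


if __name__ == "__main__":
    print("tautological test passes:", tautological_test())
    print("spec-based test passes:", spec_test())
```

The tautological test passes because it repeats the same mistake as the code, while the spec-based test fails and exposes the boundary bug. That disagreement is the signal worth investigating.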

3. Never skip the human review process

Just because AI can write code that looks right, it doesn’t mean it is right. Depending on the model, language, context and third-party integrations, you might get really well — or poorly — written code. This unpredictability is why AI code should never skip the human review process; you might instead choose to increase reviews. This could look like different things, but you should include where possible:

- Review the code yourself: Blindly taking code from any external source and hoping it’ll solve your problem is a recipe for disaster, and that’s no different with AI-generated code. Before committing anything into your codebase, you should be reviewing the code, fixing its problems and improving its weaknesses. Just like you would with your own code.

- Have a set of more senior eyes check: Having another pair of eyes check over your work is always a good thing, no matter if you’ve used AI or not. But if your confidence in the AI code is lacking, bringing in somebody more experienced might be the play. They can provide greater insight into the context and nuance of your codebase and offer advice.

- Run QA testing: While this is again something you should consider for any larger changes, it can also be worthwhile for any complex AI changes which need validation. The best QA testing is not just testing the happy path, but actively trying to break your implementation.
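To illustrate the “try to break it” mindset from the last point, here is a small sketch. The function `parse_quantity` and its rule (a whole number from 1 to 1000) are hypothetical; the point is the list of hostile inputs that sits alongside the happy path.

```python
def parse_quantity(text: str) -> int:
    """Parse an order quantity. Hypothetical rule: whole number, 1 to 1000."""
    cleaned = text.strip()
    # isdigit() alone accepts non-ASCII digits, which int() would also parse,
    # so require ASCII explicitly.
    if not cleaned.isascii() or not cleaned.isdigit():
        raise ValueError(f"not a whole number: {text!r}")
    value = int(cleaned)
    if not 1 <= value <= 1000:
        raise ValueError(f"out of range: {value}")
    return value


# Inputs chosen to break the function rather than confirm it works.
HOSTILE_INPUTS = [
    "",          # empty
    "   ",       # whitespace only
    "-5",        # negative
    "0",         # just below the range
    "1001",      # just above the range
    "1e3",       # scientific notation
    "12.5",      # fractional
    "ten",       # words
    "\u0663",    # Arabic-Indic digit three
    "9" * 50,    # absurdly large
]


def rejects(text: str) -> bool:
    """Return True if parse_quantity refuses the input with ValueError."""
    try:
        parse_quantity(text)
    except ValueError:
        return True
    return False


if __name__ == "__main__":
    print("happy path:", parse_quantity(" 42 "))
    for bad in HOSTILE_INPUTS:
        print(repr(bad), "rejected:", rejects(bad))
```

A list like this is cheap to extend whenever QA or a production incident uncovers a new way to break the input handling.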

4. Let AI help you grow

Discussions about AI-assisted coding often fall down the path of what AI can write or do for you, but it doesn’t always have to be that way. Instead, ask yourself what AI can teach you. With modern models having access to the ever-growing expanse of the internet, AI can surface the information you need.

Are you hitting an edge case that doesn’t seem to be documented anywhere? Getting non-descriptive compilation errors and you don’t know where to start debugging? Do you just not know what a certain block of code does and need an explanation? AI might be able to help you understand.

This doesn’t mean AI will always get it right, so verification of what it tells you is paramount. AI answers might introduce subtle pieces of incorrect information, like having a decimal point in the wrong spot or inverting a value, or even contain larger factual errors. The small amount of time spent verifying is often well worth it when you encounter the same issue elsewhere down the track.

5. Know when not to use AI

Just because AI can be used for coding doesn’t mean it always should be. Do you have a really complex business logic flow that you have to get right? Are you diving into a system full of two decades of tech debt and need to tread carefully? It might be better to work slowly, take small steps, and leave AI on the sidelines.

There are so many applications of AI that are almost completely positive, like generating boilerplate code, reminding you of syntax or finding the documentation you need. But AI fundamentally struggles with nuance, especially if it would take you more time to explain it to the model than to just do it yourself.

And that’s ignoring that AI models can suffer “a complete accuracy collapse” beyond a certain complexity limit.

In high-stakes environments, where you cannot afford to get anything wrong, code generation can be more of a hindrance than a helper, especially if you lack the experience to critically analyse what the AI is telling you.

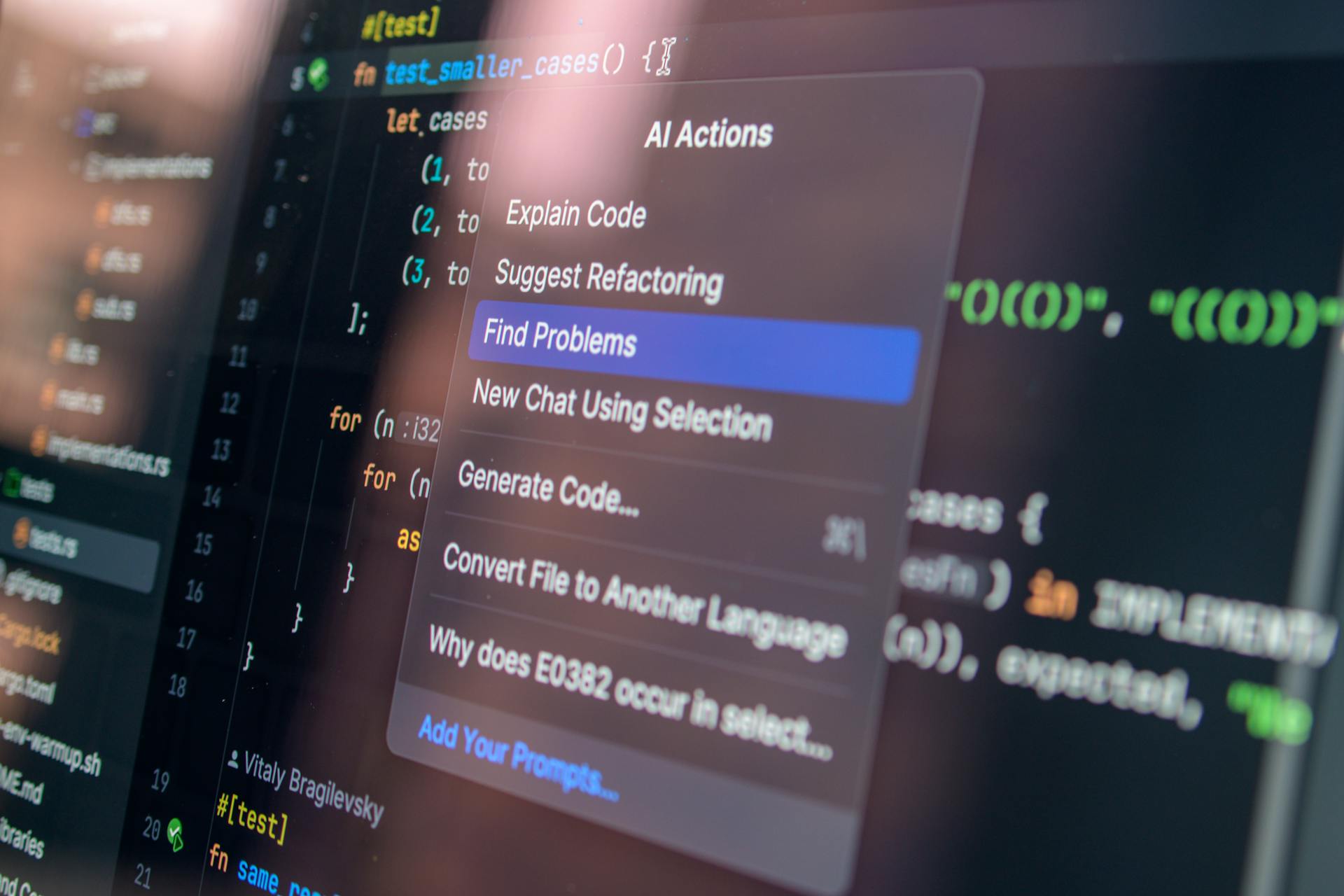

This is a perfect time to swap roles: let AI review your code. That way, your squishy human brain can first understand what’s going on before having the mechanical brain find potential errors. The key: never intrinsically believe what AI says; always investigate anything you’re unsure about yourself.

The Future of AI Coding

We’re now moving into the third era of AI, where agents will be doing much more work. Only time will tell how successful this will be, but with reduced oversight, increased complacency and fragile models, the warning signs call for caution.

At the end of the day, we as developers are building customer experiences. And if the quality of what we make starts to drop, people will notice. We’re only at the beginning of having entire projects written by AI; we’ll have to wait and see the impact on software quality and maintainability in the long run.

If you need a software solution built right, FONSEKA can help! We take the time to get things right, deliver high-quality software and maintain your product so it serves you into the future. Chat to us today at https://fonseka.com.au/contact